2011-04-07

The Internet Movie Database (IMDb) publishes a Top 250 list of movies. The list consists of the movies that have received the highest ratings from IMDb users. The ratings by which the list is sorted are weighted by the number of votes in a Bayesian manner, and a movie needs a minimum number of votes (3000) to enter the list.
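To make the Bayesian weighting concrete, here is a minimal sketch of the shrinkage formula commonly cited for the list, WR = v/(v+m) · R + m/(v+m) · C, where R is a movie's mean rating, v its number of votes, m the minimum number of votes, and C the mean rating across all movies. The function name and the value of C (7.0) are my own assumptions for illustration; only the minimum of 3000 votes comes from the text above.

```python
def weighted_rating(mean_rating, num_votes, min_votes=3000, global_mean=7.0):
    """Shrink a movie's mean rating toward the global mean.

    min_votes (m) is the entry threshold mentioned in the text;
    global_mean (C) is an assumed, illustrative value.
    """
    v, m = num_votes, min_votes
    # Few votes: the result stays close to global_mean.
    # Many votes: the result approaches the movie's own mean rating.
    return (v / (v + m)) * mean_rating + (m / (v + m)) * global_mean
```

With this form, a movie that has only just reached the vote threshold is pulled halfway toward the global mean, while a movie with hundreds of thousands of votes keeps almost all of its own mean.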

Arguably, the list enjoys some authority in determining the best movies of all time. Recently, however, some movies have soared high up the list at release and then nose-dived to lower ranks. Fearmongers note that this may indicate contamination of the list by movies that are less to the refined taste of movie experts but have been hyped by the general movie-going hordes, those who log onto IMDb after the movie to vote 10/10. This may or may not be true. For the Top 250, only votes from regular voters are considered. Yet the definition of a regular voter remains undisclosed.

I wanted to get a more quantified look at the phenomenon, so I scraped historical data from the Internet Archive and from weekly IMDb update diff-files offered by IMDb via File Transfer Protocol (FTP), and analyzed what had happened on the top 250 list over time.

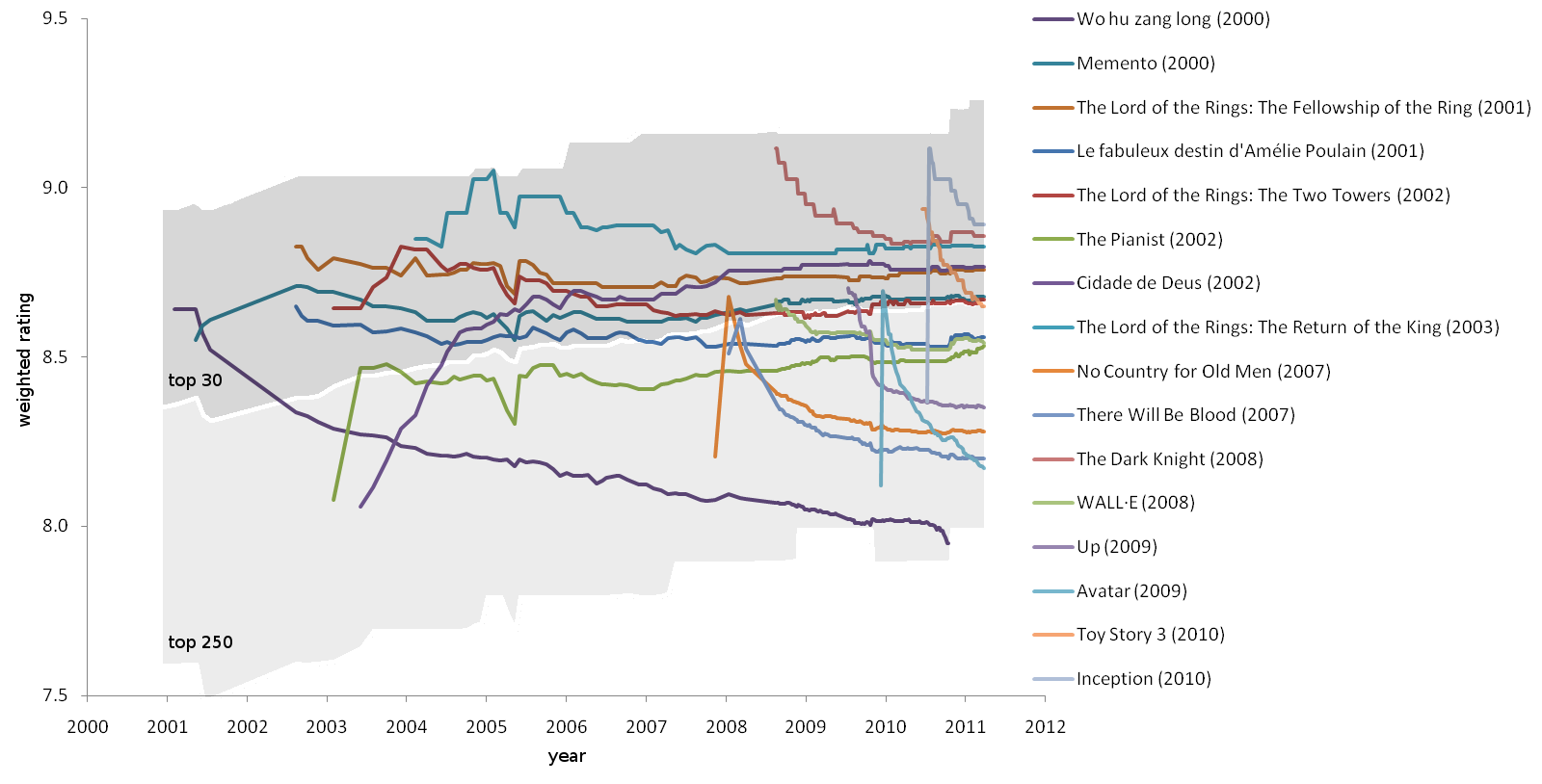

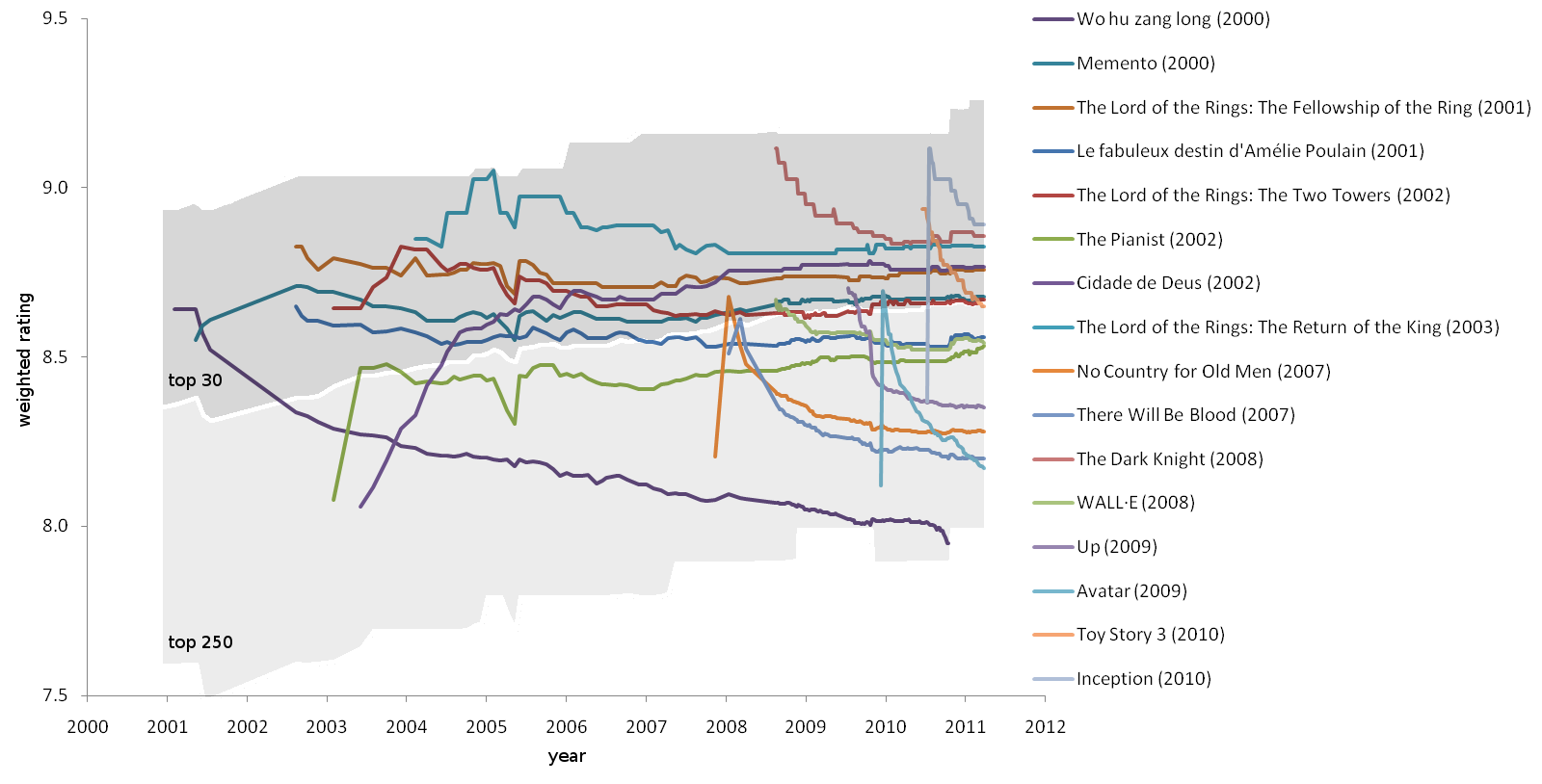

Apparently, the list has a life of its own. Of the 16 movies that had reached the top 30 and had been released within the decade of data, 9 showed a distinct initial peak in their rating but then lost at least 0.2 points, and often about 0.4 points, over a year (Fig. 1). These movies were Wo hu zang long (2000), No Country for Old Men (2007), There Will Be Blood (2007), The Dark Knight (2008), WALL·E (2008), Up (2009), Avatar (2009), Toy Story 3 (2010), and Inception (2010). A bit of psychology may explain the finding. People who think they are going to like a movie go to the premiere, or at least try to see the movie in the theater. Others have less incentive to hurry. Often enough, people know what they will like, and because most votes are probably cast soon after seeing a movie, we get high votes first and lower ones later. This must be especially true for highly anticipated and aggressively marketed movies.
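The "peak, then lose at least 0.2 points within a year" criterion can be expressed roughly as follows. This is only a sketch of one way to measure it, not necessarily how the figures were produced: the function name and the input format (a date-sorted list of `(date, rating)` pairs) are assumptions made for illustration.

```python
from datetime import timedelta

def drop_after_peak(history, window_days=365):
    """Return how far the rating fell within window_days of its peak.

    history: list of (datetime.date, rating) pairs sorted by date
    (a hypothetical format chosen for this sketch).
    """
    # The earliest date with the highest rating counts as the peak.
    peak_date, peak_rating = max(history, key=lambda point: point[1])
    cutoff = peak_date + timedelta(days=window_days)
    # Ratings observed from the peak up to one window later;
    # this always includes the peak itself, so it is never empty.
    in_window = [r for d, r in history if peak_date <= d <= cutoff]
    return peak_rating - min(in_window)
```

A movie would then count as "peaking" if `drop_after_peak(history)` is at least 0.2.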

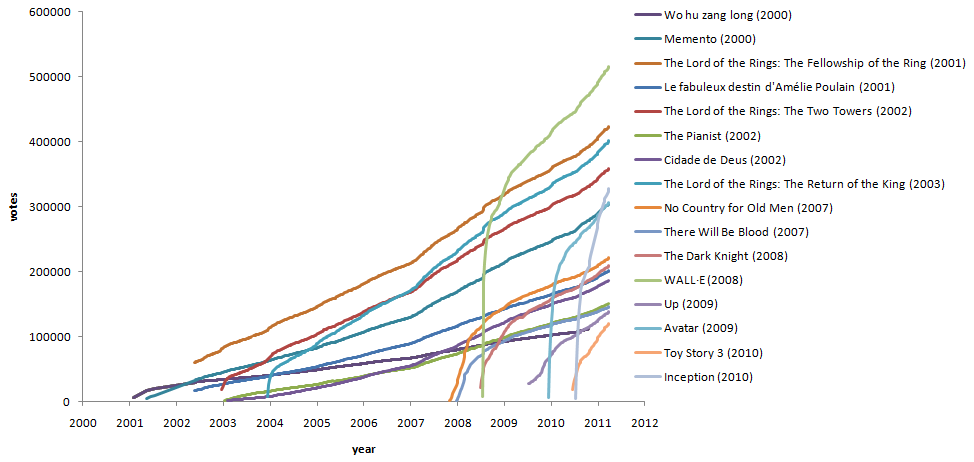

The accumulation of votes (Fig. 2) shows that for many of the high-ranking movies, as many votes were cast during the first half-year as during the following two years. Still, for any given movie, there will always be people who have not yet seen it, and the votes continue to accumulate. A significant proportion of people watch a movie soon after its release rather than later at a random time. This is related to the hype and buzz surrounding new movies, and votes will be influenced by social interactions. The temporal nature of something being fashionable would make this visible on the timeline of movie rankings.
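The comparison between the first half-year and the following two years can be sketched as a simple ratio of vote counts. The function names, the input format (date-sorted samples of `(days_since_release, cumulative_votes)`), and the choice of 182 and 730 days as window lengths are assumptions for illustration.

```python
from bisect import bisect_right

def votes_at(samples, day):
    """Cumulative votes at the latest sample taken on or before `day`."""
    days = [d for d, _ in samples]
    i = bisect_right(days, day)
    return samples[i - 1][1] if i else 0

def early_to_late_ratio(samples, early_days=182, late_days=730):
    """Votes cast in the first early_days divided by votes cast in the
    late_days that follow. A value near 1 means the two periods
    collected about the same number of votes."""
    early = votes_at(samples, early_days)
    late = votes_at(samples, early_days + late_days) - early
    return early / late if late else float("inf")
```

Because the scraped snapshots are not evenly spaced, the sketch uses the most recent snapshot before each boundary instead of interpolating.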

If a movie is novel in technology or style, it may receive a high rating that decreases over time. Wow-factors don't age well. Wo hu zang long (2000) (Crouching Tiger, Hidden Dragon), the first example of wuxia cinema for wider Western audiences, started well within the top 30 but had been kicked out of even the Top 250 by late 2010. Avatar (2009), the first high-budget 3D movie, displays the steepest drop in ratings yet and is likely to exit the Top 250 within a couple of years.

To summarize, the IMDb Top 250 faces at least two problems. The first is that, in the short term, votes are negatively correlated with the time elapsed since a movie's release, which biases the rating upwards initially. Therefore, new movies on the list should not be trusted to be as good as claimed. The second is that a movie's rating is not an objective, fixed quantity but changes over time. There is really nothing to be done about this: evaluation of art will by definition be subjective. A possible third problem is that the proportion of aggressive voters to conservative voters may have increased over time as the Internet and IMDb have become mainstream. While this is debatable, IMDb has from early on counteracted sporadic and aggressive voting by considering only votes from regular voters for the Top 250.

Despite the shortcomings, I think the IMDb Top 250 is the best "best movies" list available, on the condition that movies can be valued by viewer satisfaction. IMDb could perhaps construct a statistical model of time-dependent voting behavior to find a more stable way to predict the expected value of votes, but using too many statistical tricks might jeopardize the clarity and credibility of the list and introduce errors of its own.
